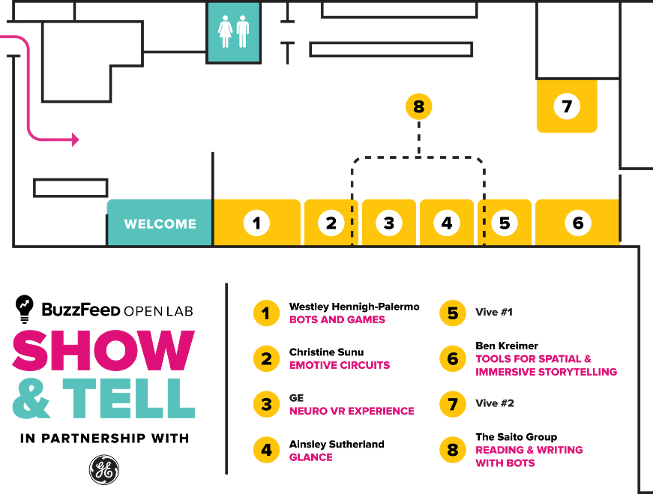

BuzzFeed Open Lab

2016 Show & Tell

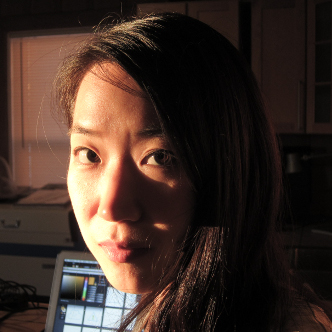

Amanda Hickman

BuzzFeed Open Lab for Journalism, Technology, and the Arts is a workshop in BuzzFeed News’s San Francisco bureau. We offer fellowships to artists and programmers and storytellers to spend a year making new work in a collaborative environment. Read more about the lab.

The lab is led by Amanda Hickman (Director) and Mat Honan (BuzzFeed SF Bureau Chief) and advised by BuzzFeed Founder and CEO Jonah Peretti, James Burns (BuzzFeed Director of Engineering), Chris Dixon (BuzzFeed board member and a16z partner), Chris Anderson (CEO of 3D Robotics and former Wired editor-in-chief), artist and engineer Natalie Jeremijenko, Greg Petroff (GE Global Research), Catherine Bracy (civic technologist and Managing Director of the TechEquity Collaborative), and T Jason Anderson (Associate Professor of Architecture at California College of the Arts, founder of studioAnomalous).

BuzzBot would love to show you around.

- For most people, simply visiting https://m.me/openlab will just work. If it doesn't ...

- If you don’t already have FB Messenger (Free, iOS and Android) on your phone, definitely install it.

- Search for “Openlab” in Messenger, or scan the QR Code by going to the People tab > Scan Code and holding your phone up to this image -->

- Click on “Get Started” or just send us a quick “hello” to get started.

- We'll walk you through the rest from there.

Westley Hennigh-Palermo Open Lab Fellow

Westley is interested in finding new ways for newsrooms to communicate with people and in enabling newsrooms to talk about and grapple with complex systems that don't lend themselves to simple narratives. In the past he has helped build a variety of independent games, educational simulations, and tools for data scientists.

Bots and Games

At the Open Lab, Westley has worked on projects including bots, games, and tools for journalists. The largest of these was BuzzBot, a Facebook Messenger bot that BuzzFeed News deployed during the RNC and DNC. BuzzBot facilitated conversation between journalists and thousands of people who were watching from home, protesting outside, or actually attending as delegates. You can chat with BuzzBot tonight and check out other work from Westley, including a game about "Shit VCs Say" and an interactive exploration of influence in Congress.

The Saito Group Open Lab / Eyebeam Fellow

The Saito Group is a collective of writers, designers and hackers in support of the right to the city, data rights and economic solidarity.

Reading and Writing With Bots

Saito created an open-source tool for geo-located social media listening. The tool scans Twitter, pulls in geo-located tweets, and lets the operator visualize what is being tweeted within a specified geographical radius. Saito also developed an intelligent auto-suggest system: an operator loads a corpus of text, and the keyboard suggests words the operator could write based on that corpus. Together, these devices scan crowds and write texts based on that crowd’s social media output.
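As a rough illustration of the auto-suggest idea, here is a minimal sketch that assumes a simple bigram (word-pair) frequency model. The program does not describe how Saito's system works internally, so the technique, the function names, and the sample corpus below are all hypothetical.

```python
import re
from collections import Counter, defaultdict
from typing import Dict, List


def build_bigram_model(corpus: str) -> Dict[str, Counter]:
    """Count, for every word in the corpus, which words follow it."""
    words = re.findall(r"[a-z']+", corpus.lower())
    model: Dict[str, Counter] = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        model[current][following] += 1
    return model


def suggest(model: Dict[str, Counter], last_word: str, k: int = 3) -> List[str]:
    """Return up to k words most often seen after last_word in the corpus."""
    followers = model.get(last_word.lower(), Counter())
    return [word for word, _ in followers.most_common(k)]


# Hypothetical corpus standing in for text loaded by the operator.
sample_corpus = "the rent is too high the rent is due the city is ours"
model = build_bigram_model(sample_corpus)
print(suggest(model, "the", k=2))
```

In this sketch the suggestions depend only on the previous word; a real system would likely use more context, but the loop of "load a corpus, then propose likely next words" is the same.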

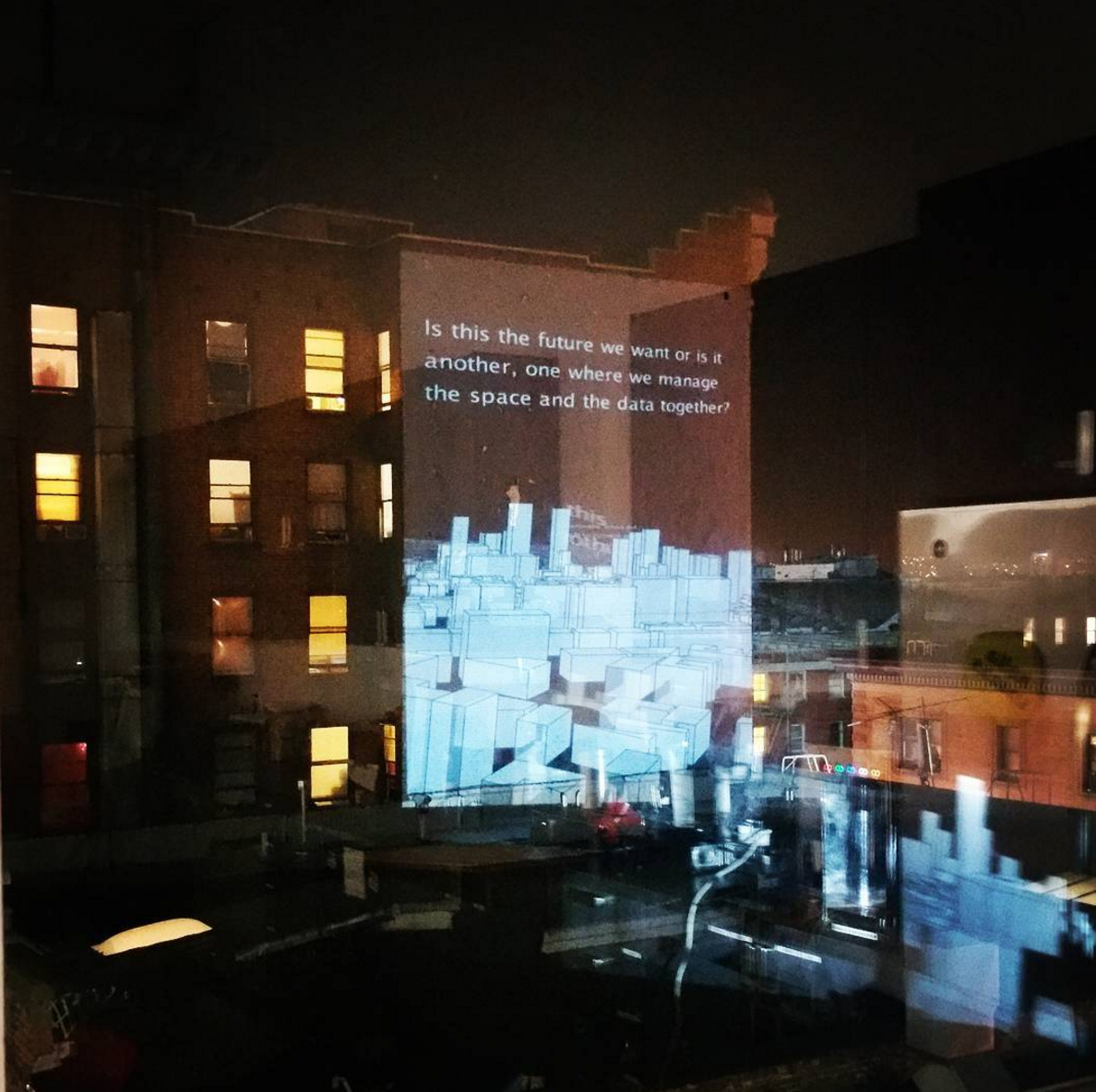

USE CASE: ANTI-EVICTION MAPPING PROJECT

Saito collaborated with the Anti-Eviction Mapping Project to design a digital billboard. The billboard scans Twitter for mentions of evictions within the geographic radius of San Francisco and navigates through a 3D map of the city, stopping at one eviction site at a time. At each site, it displays one line from a testimony about that eviction. As the billboard traverses the city, a poem about displacement emerges, along with a link to the Anti-Eviction Mapping Project.
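The "mentions within a geographic radius" step can be sketched as a keyword match combined with a great-circle distance check. This is only an illustration under stated assumptions: the post format (dicts with `text`, `lat`, and `lon` keys), the function names, the 10 km radius, and the sample posts are hypothetical, and no Twitter API calls are shown.

```python
import math
from typing import Dict, List, Tuple

EARTH_RADIUS_KM = 6371.0


def haversine_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance between two points given in decimal degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlambda = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlambda / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def posts_in_radius(posts: List[Dict], center: Tuple[float, float],
                    radius_km: float, keyword: str) -> List[Dict]:
    """Keep posts that mention keyword and lie within radius_km of center."""
    lat0, lon0 = center
    return [
        post for post in posts
        if keyword.lower() in post["text"].lower()
        and haversine_km(lat0, lon0, post["lat"], post["lon"]) <= radius_km
    ]


# Hypothetical sample data; coordinates are approximate.
san_francisco = (37.7749, -122.4194)
sample_posts = [
    {"text": "Another eviction notice on our block", "lat": 37.7599, "lon": -122.4148},
    {"text": "Eviction hearing today", "lat": 37.8044, "lon": -122.2712},
    {"text": "Great coffee this morning", "lat": 37.7749, "lon": -122.4194},
]
for post in posts_in_radius(sample_posts, san_francisco, 10.0, "eviction"):
    print(post["text"])
```

The haversine formula treats the Earth as a sphere, which is accurate enough at city scale.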

USE CASE: SWALE FLOATING GARDENS

Saito collaborated with Biome Arts to design a pavilion for Mary Mattingly’s nomadic floating garden, Swale. A sensor network measured information about the environment, including plant moisture, water flow, and weather. The Saito Group and Biome Arts designed a projection in the pavilion’s interior to represent the data collected by the sensor network; this data sculpture drew people from the shore to the barge. The Saito Group also implemented a social media monitoring network for the neighborhoods where the barge was docked, so that those interested in food, food policy, and art would know the barge was in their area.

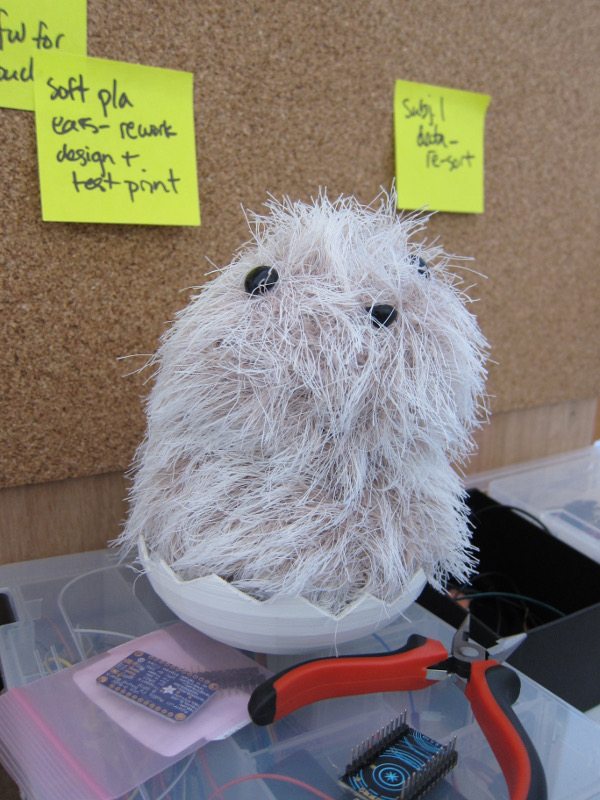

Christine Sunu Open Lab GE Fellow

Christine's research is focused on the links between emotion, design, media, and IoT. She writes and speaks widely about the importance of human connection—to technology, to ourselves, and to others. In the past, Christine has designed developer experiences, run IoT workshops, sequenced human genomes, conducted patient interviews, and killed thousands of fruit flies in the name of science. She currently lives in San Francisco and builds emotive interfaces for internet connected technology.

Emotive Circuits

Emotive Circuits is a collection of objects that showcase internet connected technology in soft and unusual forms. In her research at the Open Lab, Christine has examined the potential of the Internet of Things to augment existing objects of emotional importance and convey information through natural, life-based interfaces. Her interactive collection of plush toys, sculptures, and everyday objects is meant to exemplify this research. Please touch the exhibit.

Ben Kreimer Open Lab Beta Fellow

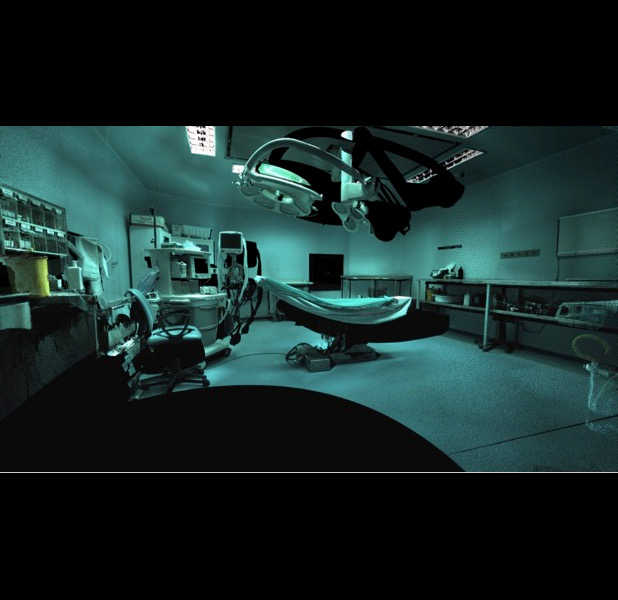

Ben explores the unique perspectives, visual experiences, and storytelling potential of drones, 3D reconstructions, 360-degree video, 3D virtual reality, and open-source hardware sensor platforms. He is an adviser for the Drone Journalism Lab and African skyCAM, and has worked with academic institutions and organizations including Columbia’s Tow Center for Digital Journalism, Times of India, CCTV Africa, VICE News, African Wildlife Foundation, and the Antiochia ad Cragum Archaeological Research Project.

Tools for Spatial and Immersive Storytelling

Ben has worked on a range of immersive, interactive, and spatial storytelling projects at the Open Lab, many of them focusing on 360 video. He has built open-source, low-cost, 3D-printed 360-video rigs for reporters, and adapted 360 cameras to helmets and drones. These camera rigs, and content produced on them by Ben and BuzzFeed reporters, are on display. In addition to 360 video projects, Ben has been experimenting with walkable, three-dimensional virtual reality environments made from photographs captured by consumer drones. In the HTC Vive you can walk through one of these environments: an archaeological excavation of an ancient Roman city, reconstructed entirely from photographs Ben took.

Ainsley Sutherland Open Lab Fellow

Ainsley is working on virtual reality research as a fellow at the BuzzFeed Open Lab. Prior to BuzzFeed, Ainsley worked as a designer, developer, and producer on virtual reality projects, alternate reality games, the decentralized web, and open government research. Before moving to Berkeley, Ainsley completed her graduate work at MIT in the Imagination, Computation, and Expression lab.

Glance

What if your body is an interface, and the environment is an agent? Ainsley has been working on Glance, a toolkit for interaction in VR that foregrounds the realtime relationship between a user's body and the VR system, and experiments with attention, sound, and embodied cognition. How can we sense the attention of others in a collaborative environment? How can we use these tools to create new types of nonfiction VR work? The prototypes and experiments on display showcase some of the research Ainsley has done towards answering these questions over the past year at the BuzzFeed Open Lab.

Thank You!

We are super indebted to the crack team of assistants, most from California College of the Arts, who really made our showcase run smoothly: Klea Bajala, Bibiana Bauer, Irmak Berktas, Laneya Billingsley, Mariana Camero, Gladia Castro, Raymond Hsia, Emily Landress, Wayne Pao, Vincent Perez, Stephen Sanford, Shar Shahfari, Maisee Xiong.